The Governance Structures that will Regulate the Future

Dominance of the global AI robotics market will determine not only who exports the lion’s share of software and machinery, but also which regulatory system, culture, and economic structure are exported along with them. Governments on the world stage differ vastly in their values and construction, which makes the pathways for the regulatory updates needed for robotic adoption incredibly varied across the globe. To follow these updates, let alone influence them, requires an understanding of the structures and cultures of the leading governments in AI policy.

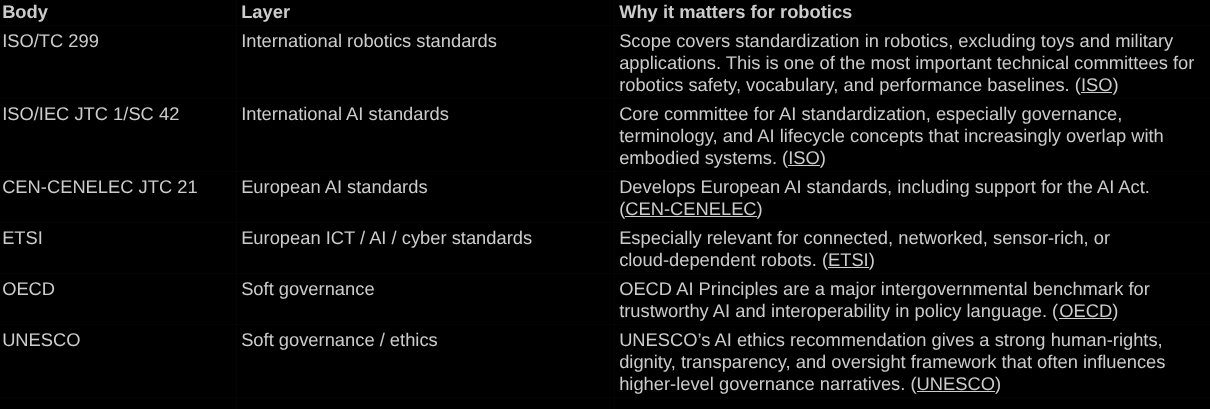

There is no unified global model for how to govern AI and robotics. Instead, three major power centers are beginning to shape the field in very different ways: China, the European Union, and the United States. Each is responding to the same technological transformation, but each is doing so in a way that reflects its own political culture, economic priorities, regulatory structures, and broader worldview for the future.

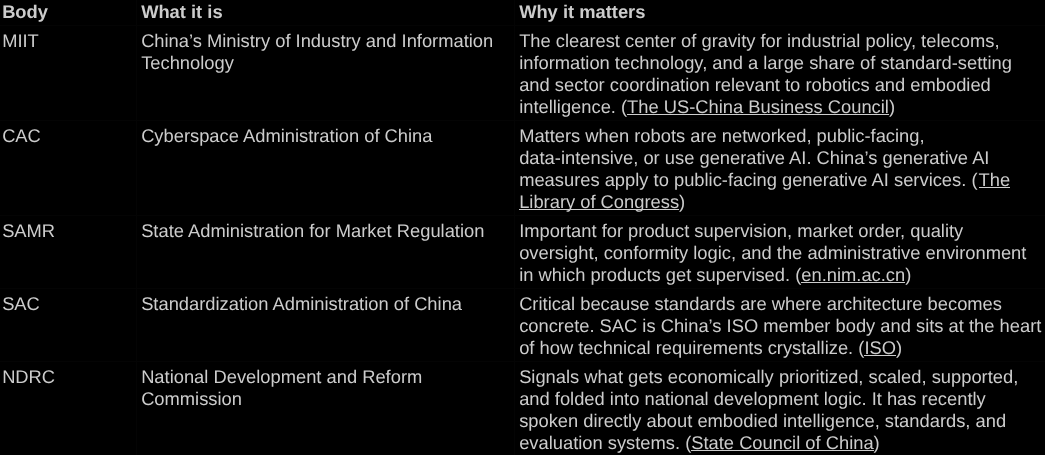

China’s approach is the most infrastructural. It tends to treat robotics as something that should be organized through coordinated systems: industrial planning, standards, rollout frameworks, and a unified national direction with close coordination between industry and government. The instinct is to build the surrounding structure that robots will eventually plug into. This reflects a governance culture that is comfortable with strict adherence to centralized planning and long-range industrial strategy for the good of the nation as a whole.

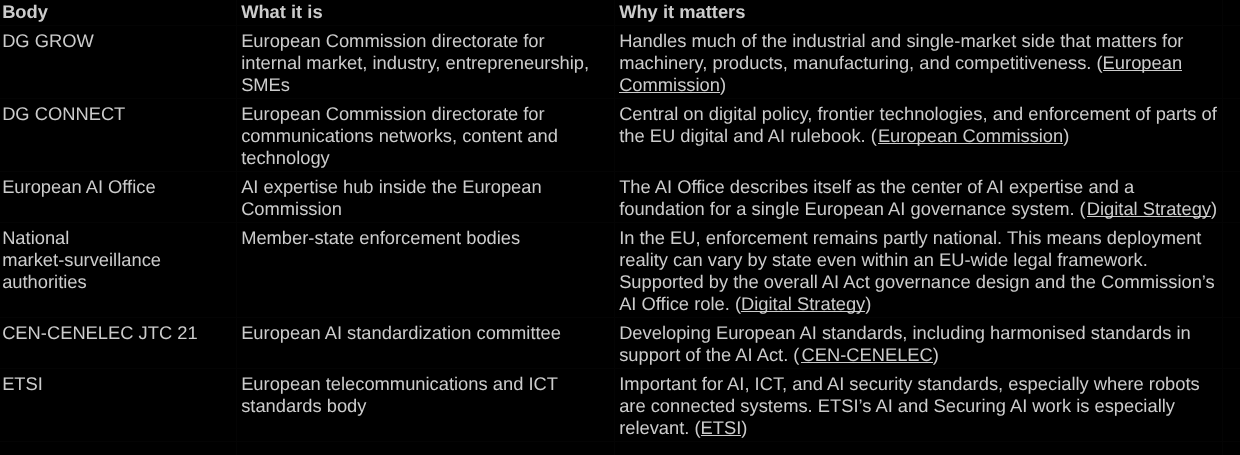

The European Union approaches robotics very differently. Its instinct is to regulate first: to define rights, obligations, standards, documentation requirements, and legal pathways before large-scale deployment becomes normal. This reflects a political culture shaped by human-first policy, precaution, public accountability, and the careful balancing of innovation with social protection. In the EU, the future is built through a maze of legal architecture.

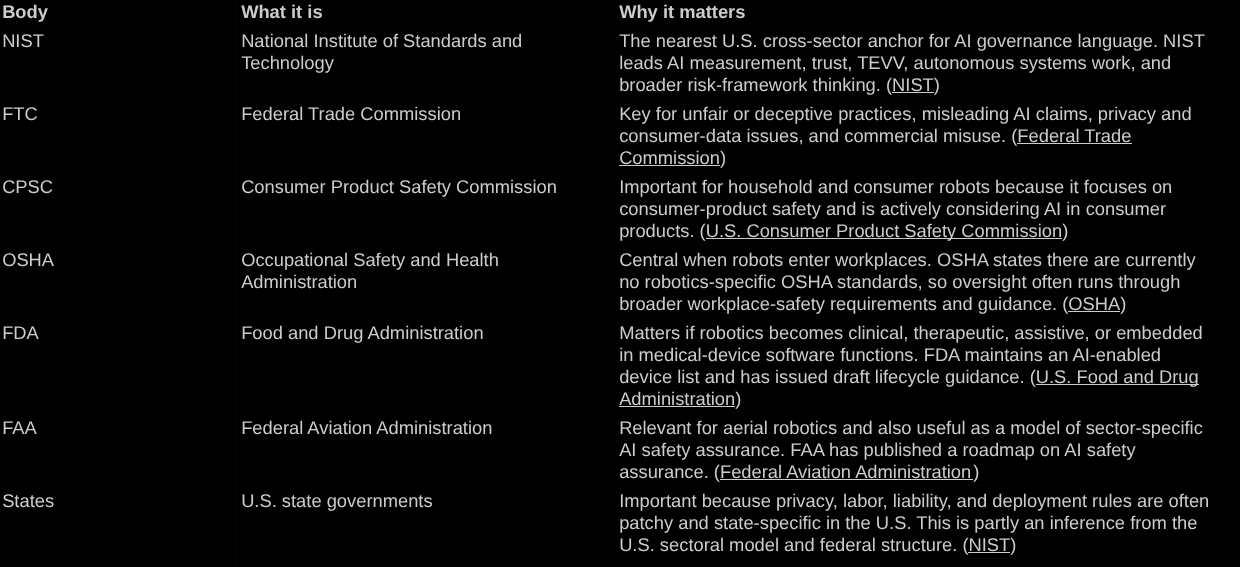

The United States is also distinct from the others. Rather than building one unified framework, it tends to let governance emerge through existing agencies, sector-specific oversight committees, technical standards, and enforcement of existing relevant laws. A robot may be treated one way if it is in the home, another if it is in a hospital, and another if it is in the workplace or public space. This reflects a more market-driven, decentralized, and adaptive model, where governance often follows innovation rather than preceding it.

These differences matter because the AI race is not only about who builds the best model, the smartest software, or the most capable humanoid. It is also about which surrounding system will become normalized globally. The winner will not simply be exporting technology. It will also export the assumptions embedded within that technology: how it is regulated, how it is identified, how much it is monitored, who is responsible for it, and what kind of relationship between state, market, and citizen it presumes.

Each government may, of course, regulate its own domain as it sees fit. However, AI and robotics are not culturally neutral. They carry with them traces of the systems that produced them. A robot developed in a heavily centralized environment may arrive with very different assumptions than one developed in a fragmented, market-led one. A regulatory model built around rights and documentation will shape adoption differently than one built around speed, industrial coordination, economic incentive, or post hoc enforcement.

That is what makes tracking, let alone influencing, AI and robotics regulation so difficult. The challenge is not only that the field is moving quickly. It is that it is moving in multiple directions at once, with enormous variation in how the governments of the major centers of power are structured. Governance is dispersed globally across competing blocs, and regionally across ministries, agencies, standards bodies, and market actors. Anyone trying to shape or follow policy in this space is not dealing with one debate, but with many overlapping debates unfolding simultaneously.

This creates an inherent tension. AI is a global market, but regulation remains deeply local, regional, and political. Countries want competitiveness, security, innovation, and control, yet the technologies themselves travel across borders. The result is a world in which robotics governance is becoming a contest not just over capability, but over values, administrative style, and economic philosophy. To follow this field seriously, then, requires more than watching product launches or model releases. It requires paying attention to the regulatory ecosystems forming around them and recognizing that what is being built is not just the future of AI, but competing visions of social order that may be exported globally alongside the embodied AI we are racing to produce.